Insight

Research

Papers and technical reports from the RLWRLD team.

-

Cog3DMap: Multi-View Vision-Language Reasoning with 3D Cognitive Maps

Cog3DMap constructs an explicit 3D cognitive map from multi-view images, grounding each token in 3D space with both semantic and geometric information, enabling MLLMs to directly reason over spatially structured representations for SOTA spa…

-

RoboAlign: Learning Test-Time Reasoning for Language-Action Alignment in Vision-Language-Action Models

RoboAlign uses RL-based reasoning alignment to bridge the language-action modality gap in VLAs, achieving up to 106.6% improvement over SFT baselines on real-world robotics tasks with less than 1% additional data.

-

SpatialBoost: Enhancing Visual Representation through Language-Guided Reasoning

SpatialBoost enhances visual representations by incorporating 3D information expressed in linguistic form.

-

RoboCurate: Harnessing Diversity with Action-Verified Neural Trajectory for Robot Learning

Neural trajectory curation framework that diversifies synthetic robot data via controllable video generation (I2I/V2V) and filters low-quality samples by comparing motion consistency between generated video and simulator replay

-

Affostruction: 3D Affordance Grounding with Generative Reconstruction

Generative reconstruction to complete occluded regions and ground affordances on full 3D shapes

-

Planning in 8 Tokens: A Compact Discrete Tokenizer for Latent World Model

CompACT compresses each observation into just 8 discrete tokens, enabling orders-of-magnitude faster planning in latent world models

-

MoGaF: Space-Time Forecasting of Dynamic Scenes with Motion-aware Gaussian Grouping

Long-term stable scene forecasting via motion-aware Gaussian grouping

-

Improving Text-to-Image Generation with Intrinsic Self-Confidence Rewards

Post-training T2I generators with the model's own self-confidence as reward, improving compositionality and text-image alignment without external reward models

-

Dexterous World Models

Proposes DWM (Dexterous World Model), a video diffusion framework that generates temporally coherent human-scene interaction videos by conditioning on static 3D scene renderings and egocentric hand mesh sequences.

-

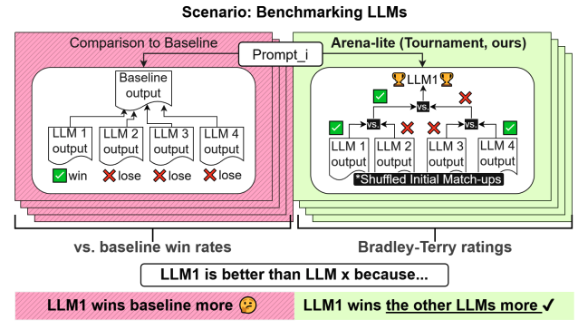

Arena-Lite: Efficient and Reliable Large Language Model Evaluation via Tournament-Based Direct Comparisons

Arena-Lite introduces a tournament-based direct comparison approach for LLM evaluation that eliminates the need for baseline outputs. By using head-to-head comparisons between systems, it achieves higher reliability with fewer comparisons t…

-

Dual-Stream Diffusion for World-Model Augmented Vision-Language-Action Model

Proposes DUST (DUal-STream diffusion), a dual-stream architecture that separately processes vision and action modalities with asynchronous sampling for world modeling.

-

Verifier-Free Test-Time Sampling for Vision Language Action Models

Introduces MG-Select, a training-free test-time scaling method that uses the KL divergence from a masked reference distribution as a confidence metric for action selection.

-

ContextVLA: Vision-Language-Action Model with Amortized Multi-Frame Context

Proposes ContextVLA, which compresses multi-frame temporal context into a single token for efficient processing without computational overhead.

-

Contrastive Representation Regularization for Vision-Language-Action Models

Introduces RS-CL (Robot State-aware Contrastive Loss) that aligns VLA representations with proprioceptive states using relative distances as soft supervision.

-

HAMLET: Switch Your Vision-Language-Action Model into a History-Aware Policy

Proposes HAMLET, a framework that adapts a Vision-Language-Action model into a history-aware policy.

-

ALLEX: Where RLWRLD’s Potential Unfolds

In collaboration with RLWRLD, WIRobotics has unveiled ALLEX, a humanoid robot engineered for safe human–robot collaboration and remarkable hand dexterity. The true intelligence from RLWRLD on ALLEX will be unveiled this fall. To meet ALLEX'…

-

Combinative Matching for Geometric Shape Assembly

The proposed approach significantly reduces local ambiguities in matching by explicitly modeling both identical surface shapes and opposite volume occupancy, enabling more accurate correspondences and ultimately allowing a robust combinatio…

-

A Unified Framework for Motion Reasoning and Generation in Human Interaction

Introduces VIM, the Versatile Interactive Motion-language model, which integrates both language and motion modalities to effectively understand, generate, and control interactive motions in multi-turn conversa…

-

Affogato: Learning Open-Vocabulary Affordance Grounding with Automated Data Generation at Scale

Affordance grounding, the task of localizing object regions based on natural language descriptions of interactions, is a critical challenge for enabling intelligent agents to understand and interact with their environments.

-

Robot-R1: Reinforcement Learning for Enhanced Embodied Reasoning in Robotics

Robot-R1 learns to predict the next keypoint state required for task completion, conditioned on the current scene image and environment metadata derived from expert demonstrations.

-

Learning Multi-frame and Monocular Prior for Estimating Geometry in Dynamic Scenes

We present a new model, coined MMP, to estimate the geometry in a feed-forward manner, which produces a dynamic pointmap representation that evolves over multiple frames. Specifically, based on the recent Siamese architecture, we introduce …

-

Target-Aware Video Diffusion Models

Given an input image, our target-aware video diffusion model generates a video in which an actor accurately interacts with the target, specified with its segmentation mask.

-

Learning 3D Object Spatial Relationships from Pre-trained 2D Diffusion Models

Given a textual description of the spatial relationship between two objects, our method models their object-object spatial relationship (OOR), which represents their relative poses and scales according to the text. We obtain OOR samples using off-the-shelf models and a proposed mesh reg…